Financial crime compliance is broken in ways that don't show up on dashboards. The alerts are getting cleared. The SARs are being filed. But somewhere between the rules engine and the analyst queue, your best investigators are burning out on work that should never have reached their desks — while the cases that actually need them wait.

The Crisis Hidden in Plain Sight

The numbers in financial crime compliance have become almost abstract in their scale. $206 billion spent globally each year on AML and fraud programs. $3 trillion in illicit funds still flowing through the financial system annually, according to Nasdaq's 2024 Global Financial Crime Report. Regulators issued $4.5 billion in fines in 2024 alone — the single largest category being transaction monitoring failures.

And yet the core operational problem isn't detection. Detection has improved dramatically over the last decade. The problem is what happens after detection — the investigation layer that sits between an alert and a defensible decision, and that has remained almost entirely manual since the first AML rule was ever written.

The math is brutal and compounding. Transaction volumes grow with every new product, every new market, every new payment rail. Alert queues grow with them. Regulators have noticed — recent enforcement actions have specifically cited institutions for failing to manage alert queues effectively, not just for missing genuine threats. The operational pressure and the regulatory pressure now point in the same direction at the same time.

Hiring more analysts is not the answer. Experienced AML investigators take years to develop. And when they spend the majority of their working hours clearing alerts that resolve to nothing, you don't just have an efficiency problem. You have a retention problem, a quality problem, and a real-risk-detection problem — because alert fatigue is itself a compliance risk. Regulators have documented cases where genuine suspicious activity was missed because analysts were overwhelmed by false positive volume.

Four Operational Failures That AI Must Actually Fix

Before talking about solutions, it is worth being specific about the real pain. The compliance technology industry has a habit of solving problems that are easy to demo rather than the ones that are hard to live with. These are the four failures that Flagright's AI suite was built against.

01. The Backlog Spiral

Alerts accumulate faster than teams can clear them. Each week of backlog creates regulatory exposure and pulls resources from current-week queues, compounding the deficit. A team of 10 analysts facing 5,000 weekly alerts is structurally and permanently underwater. Hiring adds cost without fixing the structure.
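To make the "structurally underwater" claim concrete, here is a rough back-of-the-envelope sketch. It uses the 10-analyst, 5,000-alert example above and the 5–15 minute manual investigation time quoted later in this article. The 40 productive hours per analyst per week is an assumption added for illustration, not a figure from the article.

```python
# Back-of-the-envelope backlog arithmetic.
# ASSUMPTION: 40 productive investigation hours per analyst per week.

ANALYSTS = 10
WEEKLY_ALERTS = 5_000
HOURS_PER_ANALYST_WEEK = 40  # assumption, not from the article


def weekly_hours_needed(alerts: int, minutes_per_alert: float) -> float:
    """Analyst-hours required to clear `alerts` at a given handling time."""
    return alerts * minutes_per_alert / 60


capacity_hours = ANALYSTS * HOURS_PER_ANALYST_WEEK

for label, minutes in [("5 min/alert", 5), ("15 min/alert", 15), ("1 min/alert", 1)]:
    needed = weekly_hours_needed(WEEKLY_ALERTS, minutes)
    status = "underwater" if needed > capacity_hours else "within capacity"
    print(f"{label}: {needed:.0f} h needed vs {capacity_hours} h available ({status})")
```

Under these assumptions, even the fastest manual handling time needs more hours than the team has, so the deficit carries over into the next week. Only a large drop in per-alert handling time changes the outcome.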

02. The Swivel-Chair Workflow

A single alert investigation requires pulling data from 3–5 separate systems: KYC databases, transaction logs, sanctions lists, adverse media tools, case history. Analysts spend more time navigating tools than exercising judgment. Documentation requirements add another layer. The tool is the bottleneck, not the analyst.

03. One-Size-Fits-All AI

Early compliance AI applied identical investigation logic to every alert. A sanctions hit on a high-net-worth private banking client and a routine PEP flag for a neobank customer received the same treatment. Institutions had no way to match AI behavior to their SOPs — so human review remained mandatory for everything.

04. The Trust Deficit

Compliance leaders and examiners alike have been burned by AI that cannot explain itself. When a system cannot trace its reasoning from alert to disposition in terms a regulator can follow, it creates governance risk rather than reducing it. One inexplicable decision can set an institution's AI program back by years.

These four failures are not separate problems. They are one system failure: the investigation layer was never designed for the volume, variety, and auditability demands of modern compliance. Incremental fixes have reached their ceiling. The only path forward is an investigation layer built from scratch for the demands of today's environment.

What AI-Native Compliance Actually Requires

"AI-first" has become the most overused phrase in compliance technology. In operational terms, AI-native architecture in this space requires four things that most platforms do not have simultaneously:

- Investigation autonomy, not just summarization. AI that tells an analyst what an alert says is decision support. AI that executes the investigation — gathers evidence, applies SOPs, reaches a reasoned disposition — is investigation replacement. Only the latter reduces queue depth. Only the latter solves the structural problem.

- Configurability to match institutional procedure. Every institution has different SOPs, risk appetites, and regulatory environments. An AI system that cannot be configured to your procedures will always require a human to bridge the gap between what the AI did and what your compliance program requires.

- Full auditability at the individual decision level. Regulators do not evaluate AI accuracy in aggregate. They evaluate individual decisions. If a system cannot explain exactly why it dispositioned a specific alert — citing the steps it took, the sources it consulted, and the reasoning it applied — it cannot operate in a regulated environment at scale.

- Proof of performance before deployment. "Trust us, it works" is not an AI governance framework. Institutions need to validate AI performance on their own historical data before any live alert is touched. Without this, AI deployment requires a leap of faith that risk-averse compliance teams — quite reasonably — cannot take.

It only takes one hallucinated result for an institution to completely disregard AI. Trust is not a feature; it is the architecture. — Madhu Nadig, Co-Founder & CTO, Flagright

Flagright's AI Suite: Five Capabilities That Change the Equation

1. Autonomous Investigation — AI That Does the Work, Not Just the Summary

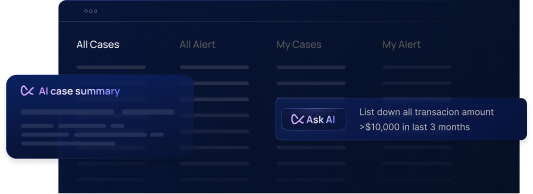

AI Forensics (AIF®) is Flagright's purpose-built agentic investigation engine. Unlike AI tools that generate summaries for analysts to act on, AIF executes the investigation itself — following your institution's Standard Operating Procedures step by step, gathering evidence from every relevant data source, and reaching a defensible disposition in seconds rather than minutes.

The distinction matters operationally. A summarization tool reduces the time an analyst spends reading. An investigation agent eliminates the investigation entirely for a defined category of alerts. Only the latter reduces queue depth. Only the latter solves the backlog spiral.

Assisted Mode

The agent pre-investigates every alert before it reaches the queue. The analyst reviews a completed investigation and makes the final call. Investigation time: 5–15 min → under 60 seconds.

Autopilot Mode

For defined low-risk, high-volume queues, agents investigate and close autonomously. Human oversight moves to the governance level (sampling, monitoring, exception review) rather than alert-by-alert sign-off.

2. Rule-Level Configurability That Matches Your Program Exactly

AIF EVO introduces a fundamental shift: AI Agent templates assigned at the detection rule level. This means the investigation workflow is not just configurable — it is configurable per rule, so the AI behaves differently based on the specific risk profile of each alert type it investigates.

Your "OFAC SDN — High-Risk Jurisdiction" rule can use a conservative, multi-step investigation requiring manual analyst initiation. Your "Retail PEP Screening — Low Value" rule can run an efficient, auto-investigating template for customers below a defined risk score. Different rules, different procedures, different risk tolerance — the AI matches all of it. This is the difference between an AI system that adapts to your institution and one that asks your institution to adapt to it.
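As a rough illustration of what rule-level assignment could look like, the sketch below maps detection rules to agent templates. Everything in it is hypothetical: the rule IDs, the `AgentTemplate` fields, the step names, and the risk-score threshold are invented for this example and do not represent Flagright's actual configuration schema.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Optional, Tuple


class Mode(Enum):
    MANUAL_START = "manual_start"  # an analyst must initiate the investigation
    AUTO_INVESTIGATE = "auto"      # the agent starts as soon as the alert fires


@dataclass(frozen=True)
class AgentTemplate:
    name: str
    mode: Mode
    steps: Tuple[str, ...]
    max_risk_score: Optional[float] = None  # auto-run only below this score


# Hypothetical rule IDs and templates, for illustration only.
RULE_TEMPLATES: Dict[str, AgentTemplate] = {
    "ofac_sdn_high_risk_jurisdiction": AgentTemplate(
        name="conservative_sanctions",
        mode=Mode.MANUAL_START,
        steps=("verify_identifiers", "check_ownership", "review_history", "draft_narrative"),
    ),
    "retail_pep_low_value": AgentTemplate(
        name="efficient_pep",
        mode=Mode.AUTO_INVESTIGATE,
        steps=("verify_identifiers", "draft_narrative"),
        max_risk_score=40.0,
    ),
}


def should_auto_investigate(rule_id: str, customer_risk_score: float) -> bool:
    """Return True only when the rule's template allows an automatic start."""
    template = RULE_TEMPLATES.get(rule_id)
    if template is None or template.mode is not Mode.AUTO_INVESTIGATE:
        return False  # unknown rules and manual templates wait for an analyst
    if template.max_risk_score is None:
        return True
    return customer_risk_score < template.max_risk_score
```

The design choice worth noting is the conservative fallback: a rule with no template, or a customer above the threshold, never starts automatically.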

The configuration builder is fully no-code. Institutions upload their existing SOPs, the platform auto-generates an agent workflow mapping to those procedures step by step, and compliance teams review and adjust before anything goes live. First agent: typically deployed in hours.

3. Simulation Engine: Prove It Before You Deploy It

The most common objection to AI in compliance is: "How do I know it will work for my institution?" Industry benchmark statistics from a vendor's other customers don't answer this. Flagright's Simulation Engine does — by replaying any AI Agent configuration against your own historical alert data, comparing AI dispositions directly against what your analysts actually decided on those same cases.

This is not a proof-of-concept pilot. It is a standard part of the deployment process, included in AIF EVO at no additional cost. Five full simulations per institution. Before a single live alert is touched.

What Simulation Measures

- Agreement rate vs. analyst decisions

- False positive clearance rate

- False negative / missed risk rate

- Projected time savings

- Override risk

- Per-alert drill-down with full step-by-step narrative
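Three of these measures can be sketched from a replay of historical alerts. The definitions below are assumptions made for illustration: analyst decisions are treated as ground truth, "false positive clearance" is the share of analyst-closed alerts the AI also closed, and "missed risk" is the share of analyst-escalated alerts the AI closed. The platform's own definitions may differ.

```python
from typing import Dict, List, Tuple

CLOSE, ESCALATE = "close", "escalate"


def simulation_metrics(pairs: List[Tuple[str, str]]) -> Dict[str, float]:
    """Compare (analyst_disposition, ai_disposition) pairs per historical alert."""
    if not pairs:
        raise ValueError("no historical alerts to replay")

    agreed = sum(1 for analyst, ai in pairs if analyst == ai)
    ai_on_analyst_closed = [ai for analyst, ai in pairs if analyst == CLOSE]
    ai_on_analyst_escalated = [ai for analyst, ai in pairs if analyst == ESCALATE]

    def rate(hits: int, total: int) -> float:
        return hits / total if total else 0.0

    return {
        "agreement_rate": agreed / len(pairs),
        # Share of analyst-closed alerts that the AI also closed.
        "fp_clearance_rate": rate(ai_on_analyst_closed.count(CLOSE), len(ai_on_analyst_closed)),
        # Share of analyst-escalated alerts that the AI would have closed.
        "missed_risk_rate": rate(ai_on_analyst_escalated.count(CLOSE), len(ai_on_analyst_escalated)),
    }


# Made-up replay of ten alerts, for illustration only.
history = (
    [(CLOSE, CLOSE)] * 7
    + [(CLOSE, ESCALATE)]
    + [(ESCALATE, ESCALATE)]
    + [(ESCALATE, CLOSE)]
)
metrics = simulation_metrics(history)
print(metrics)
```

In this made-up replay the AI agrees with analysts on 8 of 10 alerts, but it would have closed one of the two alerts analysts escalated. That is why the missed-risk rate deserves separate attention from the headline agreement rate.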

What Simulation Enables

- Side-by-side comparison of conservative vs. efficient configs

- Version comparison before updates go live

- A/B testing with and without internet search

- Regulatory-ready governance evidence pre-deployment

Compliance leaders can show examiners not just that the AI is performing well in production, but that they validated performance on their own data before going live. That is a fundamentally different regulatory conversation than "our vendor's accuracy rate is 99%."

4. Web Research Built Into Investigation

One of the most persistent friction points in compliance investigation — particularly for sanctions and PEP screening — is the data gap. A customer matches a sanctions entry by name, but the institution only has name and address on file. The analyst's next step is manual research: government registers, parliamentary records, official sanctions databases, news archives. Time-consuming. Done inconsistently. And for lower-priority alerts, often skipped entirely.

AIF EVO gives AI Forensics agents the same research capability — as an integrated investigation step, with full governance controls on which sources are permissible per rule type.

Every search is governed, logged, and auditable. Source domains are configurable per rule type. All URLs accessed are cited in the investigation record. Conflicting or ambiguous results trigger manual review rather than automated clearance. For PEP screening cases with limited identifier data — one of the industry's most persistent unresolved problems — internet research reduces the percentage of cases requiring client follow-up by an estimated 50–70%.
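The governance controls described above (per-rule source allowlists, logging of every URL, and manual review on conflicting results) could be sketched as follows. This is a minimal illustration under stated assumptions, not the product's implementation: the rule types, the example domains, the `ResearchLog` structure, and the verdict labels are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set
from urllib.parse import urlparse

# Hypothetical per-rule-type allowlists; the domains are illustrative only.
ALLOWED_DOMAINS: Dict[str, Set[str]] = {
    "sanctions": {"treasury.gov", "gov.uk", "europa.eu"},
    "pep": {"parliament.uk", "europa.eu"},
}


@dataclass
class ResearchLog:
    rule_type: str
    cited_urls: List[str] = field(default_factory=list)
    blocked_urls: List[str] = field(default_factory=list)


def is_permitted(rule_type: str, url: str) -> bool:
    """Allow a URL only if its host is an allowlisted domain or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    allowed = ALLOWED_DOMAINS.get(rule_type, set())
    return any(host == domain or host.endswith("." + domain) for domain in allowed)


def record_access(log: ResearchLog, url: str) -> bool:
    """Log every attempted URL, whether it was permitted or blocked."""
    if is_permitted(log.rule_type, url):
        log.cited_urls.append(url)
        return True
    log.blocked_urls.append(url)
    return False


def resolve(verdicts: List[str]) -> str:
    """Clear only on unanimous 'no_match'; anything mixed or empty goes to a human."""
    unique = set(verdicts)
    if unique == {"no_match"}:
        return "clear"
    if unique == {"match"}:
        return "escalate"  # assumption: a unanimous match is escalated
    return "manual_review"
```

A short usage example: `record_access(ResearchLog("sanctions"), "https://ofac.treasury.gov/")` is permitted and cited, while `resolve(["match", "no_match"])` returns `"manual_review"` because the sources disagree.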

5. Compliance-Grade Trust: Explainability That Holds Up in the Examination Room

The trust problem in compliance AI is architectural, not philosophical. An AI system that produces accurate results but cannot explain individual decisions in terms an examiner can follow is not a compliance control. It is a governance liability. Flagright's approach operates at four structural levels:

- Hallucination prevention by constraint. Agents are grounded in customer-defined SOPs and validated checklists. If the AI cannot support a finding with actual evidence from sources it has accessed, it does not make the finding. This is a hard architectural rule, not a system prompt instruction.

- Full reasoning chains per alert. Every AIF investigation produces a complete, human-readable audit trail: every step executed, every data source consulted, every web search and URL accessed, and the reasoning connecting evidence to disposition. Every individual case is fully traceable end-to-end.

- Version-controlled agent governance. Every configuration change creates a new version with a full audit trail and simulation results. Institutions can show examiners the performance history of every version, roll back to any prior configuration, and demonstrate that changes were validated before deployment.

- Human-in-the-loop as the structural default. Autonomous operation is earned through demonstrated simulation performance and expanded deliberately, never granted as a default. Until that point, AI recommends and humans decide. This is the mechanism by which regulatory trust is built incrementally, not assumed.
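One way to picture "hallucination prevention by constraint" together with a per-alert reasoning chain is a data model that refuses any finding not backed by a source the investigation actually consulted. The sketch below is an assumption about how such a rule might be enforced in code. The class names and fields are hypothetical and are not taken from Flagright's system.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple


@dataclass(frozen=True)
class Evidence:
    source: str   # e.g. "kyc_record" or a URL
    excerpt: str


@dataclass(frozen=True)
class Finding:
    statement: str
    evidence: Tuple[Evidence, ...]


class UnsupportedFindingError(ValueError):
    """Raised when a finding lacks evidence from a consulted source."""


@dataclass
class Investigation:
    alert_id: str
    accessed_sources: Set[str] = field(default_factory=set)
    trail: List[str] = field(default_factory=list)
    findings: List[Finding] = field(default_factory=list)

    def consult(self, source: str) -> None:
        """Record that a data source was consulted."""
        self.accessed_sources.add(source)
        self.trail.append(f"consulted {source}")

    def add_finding(self, finding: Finding) -> None:
        """Accept a finding only if every citation points to a consulted source."""
        if not finding.evidence:
            raise UnsupportedFindingError(f"no evidence for: {finding.statement}")
        for item in finding.evidence:
            if item.source not in self.accessed_sources:
                raise UnsupportedFindingError(f"source never consulted: {item.source}")
        self.findings.append(finding)
        cited = ", ".join(item.source for item in finding.evidence)
        self.trail.append(f"finding: {finding.statement} [sources: {cited}]")
```

Because the check lives in the data model rather than in a prompt, an unsupported statement cannot enter the case record at all, and the `trail` list doubles as the human-readable audit trail.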

AI Across the Full Financial Crime Lifecycle

Flagright's AI suite spans every stage of detection-to-disposition on a single, integrated infrastructure — with rules and AI sharing the same back-testing environment so performance is measured consistently across both layers.

- Transaction Monitoring (AIF4TM). Agentic investigation of transaction monitoring alerts — structuring patterns, rapid fund movements, cross-border layering, smurfing — following institution-configured investigation templates per detection rule. Rules and AI are architecturally integrated, not siloed.

- Sanctions, PEP & Adverse Media Screening (AIF4S). Purpose-built agents across OFAC, HM Treasury, EU, and other regimes, plus all three PEP political exposure levels and adverse media. Web research resolves ambiguous name matches without client follow-up. Adverse media source credibility is assessed and documented automatically.

- Customer Risk Scoring. Dynamic risk scoring updating in real time on transaction behavior, screening outcomes, and case history — feeding directly into AIF template configuration to calibrate autonomy thresholds by customer segment.

- Case Management & SAR Preparation. Every AIF investigation automatically generates a complete case record — full reasoning chain, evidence citations, source URLs — ready for escalation, regulatory filing, or examiner review without additional documentation effort.

What This Looks Like in Practice

Architecture arguments are necessary context. Compliance leaders make decisions on outcomes. Here is what Flagright's AI suite has delivered across payments, crypto, neobanking, and cross-border remittance.

B4B Payments · European Payment Service Provider

"We were generating more alerts than our team could realistically work through at the standard our compliance program required. The backlog was growing every week and hiring wasn't keeping pace with transaction volume growth."

- Investigation time: 5–15 min → under 60 sec

- Analyst capacity effectively multiplied 5×

- Backlog cleared without additional headcount

- Full audit trail on every AIF decision

Sciopay · Cross-Border Payments Platform

"Multi-jurisdiction sanctions screening generated high volumes of name-match alerts requiring manual research to resolve. Analysts were spending hours per day on cases that almost always closed as false positives — work that kept them from higher-risk investigations."

- AIF4S deployed for sanctions + PEP investigation

- Web research resolving ambiguous matches autonomously

- Manual L1 research hours per day: near-zero

- Client follow-up rate significantly reduced

Localcoin · Crypto Exchange

"Crypto transaction monitoring generates high velocity and novel patterns with regulatory scrutiny that makes every disposition feel consequential. We needed AI that could document its reasoning in a way we could actually present to a regulator — not just a recommendation with no traceable logic."

- AIF4TM with custom investigation templates per rule

- Full step-by-step reasoning chain on every disposition

- Regulator-ready audit trail on all AI-investigated alerts

- Analyst capacity freed for complex escalation cases

The Institutions That Move First Win Twice

There is a compounding dynamic in compliance AI adoption that most institutions have not fully reckoned with. Institutions deploying AI forensics now are not just solving today's backlog. They are building the institutional muscle, the regulatory track record, and the governance infrastructure that will allow them to responsibly expand automation as their programs mature.

Institutions that wait are building larger backlogs, deeper analyst burnout, and a longer runway to the regulatory confidence that makes autonomous operation possible. The gap between early movers and late adopters in this space widens every quarter — because the volume problem does not pause while institutions evaluate.

What Flagright's AI Suite Means for Your Program

Investigation backlogs cleared without headcount expansion. Analyst time reallocated to genuine risk. AI configuration validated on your own historical data before it touches a live alert. Every disposition explainable to an examiner, down to the individual step and URL consulted. And a governance framework that expands with your regulatory confidence — not one that requires a leap of faith to deploy.

The future of financial crime compliance is not more analysts doing the same work faster. It is AI executing the procedural investigation work that should never have consumed experienced analyst time, so that human expertise can be focused precisely where it matters: the cases that are genuinely ambiguous, genuinely complex, and genuinely consequential.
