In an increasingly interconnected world where financial systems and advanced technologies are intertwined, the era of digital finance is not merely upon us; it has permeated almost every aspect of the industry. As a result, financial compliance has expanded and evolved, embracing the advances that AI and machine learning have brought while also encountering novel challenges that demand fresh perspectives.

One such challenge is algorithmic bias, a subject garnering significant attention from stakeholders ranging from technology developers to regulators and, most importantly, compliance officers. As financial institutions increasingly rely on AI systems across their operations, understanding and addressing algorithmic bias becomes more pressing. Such biases, intentional or otherwise, can skew data interpretation, distort decision-making, and ultimately cause financial and reputational harm.

As AI integration grows, compliance officers play a central role. The onus falls on them to navigate this new landscape, ensuring that the benefits of AI and machine learning are maximized while the risks, particularly those posed by algorithmic bias, are minimized.

In this article, we demystify algorithmic bias and explain why it matters for compliance officers. We unpack what algorithmic bias is, examine its main types, discuss the evolving role of compliance officers in the digital age, and explore ten key reasons why understanding algorithmic bias is crucial for them. We conclude with insights into how advanced platforms like Flagright can be a strong ally in navigating these challenges.

As we move forward, let us remember that knowledge is the first step in fostering a fair, unbiased, and trustworthy AI-driven financial ecosystem, and compliance officers hold the key to that door. So, let's turn the key and step in.

Unpacking algorithmic bias

In the context of artificial intelligence and machine learning, algorithmic bias refers to systematic errors in the output of an algorithm that create unfair outcomes, such as privileging one group of users over others. To mitigate its effects, it's essential to understand the types of bias and how they infiltrate financial systems.

Four types of algorithmic bias are commonly discussed:

Pre-existing bias

This type of bias originates from underlying social prejudices. If an algorithm is trained on historical financial data that reflects biased human decisions, it can learn and replicate those biases. For instance, if a loan approval algorithm is trained on data where a specific racial group has been historically disadvantaged, the algorithm might perpetuate this bias.
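To make this concrete, here is a minimal Python sketch built on entirely hypothetical toy data and a deliberately naive "model" that simply memorizes historical approval rates per (income band, zip code) cell. The protected attribute never appears in the data, yet because zip code can act as a proxy for it, two applicants in the same income band receive different outcomes. The names `train` and `predict` are illustrative, not any real library's API.

```python
from collections import defaultdict

# Hypothetical historical decisions: (income_band, zip_code, approved).
# In this toy data, applicants from zip "B" were denied more often at the same income band.
history = [
    ("high", "A", True), ("high", "A", True), ("high", "A", True), ("high", "A", False),
    ("high", "B", True), ("high", "B", False), ("high", "B", False), ("high", "B", False),
    ("low", "A", True), ("low", "A", False),
    ("low", "B", False), ("low", "B", False),
]

def train(records):
    """Learn the historical approval rate for each (income_band, zip_code) cell."""
    counts = defaultdict(lambda: [0, 0])  # cell -> [approved, total]
    for income, zip_code, approved in records:
        counts[(income, zip_code)][0] += int(approved)
        counts[(income, zip_code)][1] += 1
    return {cell: approved / total for cell, (approved, total) in counts.items()}

def predict(model, income, zip_code, threshold=0.5):
    """Approve when the learned historical approval rate meets the threshold."""
    return model.get((income, zip_code), 0.0) >= threshold

model = train(history)
print(predict(model, "high", "A"))  # True  (learned rate 0.75)
print(predict(model, "high", "B"))  # False (learned rate 0.25)
```

Nothing in the code is explicitly discriminatory; the disparity comes entirely from the labels it learned from, which is what makes pre-existing bias hard to spot without testing outcomes.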

Technical bias

This form of bias occurs due to technical constraints or decisions made during the development of the algorithm. For instance, if an algorithm designed to detect fraudulent transactions only considers certain types of transactions while ignoring others, it could result in biased output.
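A minimal sketch of how such a gap can be surfaced, assuming a hypothetical detector that was only built to score card payments and a hypothetical 10,000 amount threshold. Auditing which transaction types the detector actually evaluates shows that wire and crypto transfers are never scored, regardless of their size.

```python
from collections import Counter

# Hypothetical scope decision: the detector only supports card payments.
SUPPORTED_TYPES = {"card"}

def is_suspicious(txn):
    """Flag large card payments; return None for types the detector never evaluates."""
    if txn["type"] not in SUPPORTED_TYPES:
        return None  # silently out of scope
    return txn["amount"] > 10_000

transactions = [
    {"type": "card", "amount": 12_000},
    {"type": "card", "amount": 50},
    {"type": "wire", "amount": 90_000},
    {"type": "crypto", "amount": 25_000},
]

def coverage_by_type(txns):
    """Share of transactions of each type that the detector actually evaluated."""
    seen, scored = Counter(), Counter()
    for txn in txns:
        seen[txn["type"]] += 1
        if is_suspicious(txn) is not None:
            scored[txn["type"]] += 1
    return {t: scored[t] / seen[t] for t in seen}

print(coverage_by_type(transactions))  # {'card': 1.0, 'wire': 0.0, 'crypto': 0.0}
```

A coverage report like this is cheap to produce and makes a technical scoping decision visible to reviewers before it turns into a blind spot.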

Emergent bias

This bias emerges as an algorithm interacts with users over time. For instance, an algorithm that recommends financial products might create a feedback loop in which popular products are recommended more often, to the disadvantage of lesser-known or new financial products.
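The feedback loop described above can be illustrated with a tiny simulation. It assumes a hypothetical recommender that always promotes the currently most popular product, and that each recommendation adds exactly one adoption; the product names and starting counts are made up.

```python
def simulate(popularity, rounds):
    """Each round, recommend the most popular product; it gains one adoption."""
    pop = dict(popularity)  # copy so the caller's data is not modified
    history = []
    for _ in range(rounds):
        top = max(pop, key=pop.get)  # recommend the current leader
        pop[top] += 1                # assumed user response: one new adoption
        history.append(top)
    return pop, history

start = {"index_fund": 10, "green_bond": 9, "new_savings": 1}
final, recs = simulate(start, 5)
print(final)  # {'index_fund': 15, 'green_bond': 9, 'new_savings': 1}
print(recs)   # ['index_fund', 'index_fund', 'index_fund', 'index_fund', 'index_fund']
```

A one-adoption gap at the start becomes a six-adoption gap after five rounds, and the newer products are never shown at all. Real user behavior is noisier than this, but the self-reinforcing dynamic is the same.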

Confirmation bias

This type of bias can occur when an algorithm is built, deliberately or not, to confirm the existing beliefs or hypotheses of its developers, leading to a biased model.

Bias infiltrates financial systems when these biased algorithms are used in decision-making processes. For example, loan approval algorithms might discriminate against certain racial groups, or credit scoring algorithms might disadvantage people from certain geographical locations. Algorithmic bias in financial systems can lead to significant harm, including unfair practices, customer dissatisfaction, and even regulatory non-compliance.

There are numerous real-world examples of algorithmic bias causing significant problems. For instance, Amazon scrapped an AI recruitment tool after finding it was biased against women. In another case, a study found racial bias in an algorithm used for healthcare management in the United States: Black patients were less likely than equally sick white patients to be referred to programs that aim to improve care for patients with complex medical needs.

Understanding these types of bias and how they can seep into financial systems highlights the potential risks that algorithmic bias poses to the financial industry. It also underscores the responsibility of compliance officers to ensure that the use of AI and machine learning in financial processes is fair, transparent, and equitable.

The evolving role of compliance officers in the digital age

In the financial industry, compliance officers serve as the guardians of integrity and ethical conduct. Traditionally, their roles involved ensuring that an organization adheres to internal policies and external regulatory requirements, investigating irregularities, educating employees on compliance protocols, and acting as the point of contact for regulatory bodies.

However, as the digital revolution sweeps across industries, the role of compliance officers is rapidly evolving. They now find themselves at the intersection of financial operations and technology, particularly AI and machine learning, with new responsibilities and challenges.

The influence of AI and machine learning on compliance processes has been profound. AI tools are now used for tasks such as identifying suspicious transactions, monitoring regulatory changes, and automating routine compliance work. These technologies have improved efficiency, reduced human error, and enabled more proactive risk management. They have also freed compliance officers to focus on more strategic tasks, such as formulating compliance strategies, shaping ethical conduct, and leading digital transformation.

However, the integration of AI also brings new challenges, such as algorithmic bias. As such, it has become crucial for compliance officers to understand and mitigate this bias. When AI systems produce biased results, they can lead to unfair practices, customer dissatisfaction, and even regulatory breaches, all of which can result in financial and reputational damage.

Compliance officers, therefore, must develop new skills and knowledge, including a thorough understanding of AI and machine learning, their operational principles, and the potential sources of bias. With this knowledge, they can effectively evaluate AI systems, ensure that they are used responsibly, and develop strategies to mitigate potential bias. They should also be involved in the development and testing phases of these systems, providing valuable input from a compliance perspective.

Moreover, compliance officers need to keep pace with the emerging regulatory landscape surrounding the use of AI in finance. They need to ensure that their organizations' AI systems meet these regulatory requirements, which may involve demonstrating the fairness and transparency of these systems to regulators.

In this context, compliance officers have a unique opportunity to lead their organizations in the responsible use of AI, ensuring not only regulatory compliance but also fairness and ethical conduct. They can advocate for transparency, accountability, and inclusivity in AI, shaping the future of digital finance. This emerging role places compliance officers at the forefront of the digital transformation in finance, giving them a central role in navigating the challenges and seizing the opportunities that this transformation presents.

10 reasons understanding algorithmic bias is critical for compliance officers

Understanding algorithmic bias is more than just a precautionary step for compliance officers—it is a pivotal factor in determining the success of AI-driven financial systems. Here are ten reasons why this understanding is critical:

1. Ensuring fairness

Algorithms can unintentionally perpetuate and exacerbate historical biases and disparities. Compliance officers equipped with the knowledge of algorithmic bias can help ensure fair treatment for all customers by detecting and mitigating these biases.

2. Building trust in AI systems

Trust is a foundational element for the acceptance of AI among both customers and regulators. At financial institutions such as brokerages and trusts, addressing algorithmic bias allows compliance officers to enhance the credibility and reliability of AI systems, fostering stronger trust in these technologies.

3. Regulatory compliance

As AI gains traction in the financial industry, regulatory bodies are introducing rules to ensure fairness and transparency. Understanding and mitigating algorithmic bias can help compliance officers ensure that their AI systems align with these regulatory requirements, thereby avoiding non-compliance penalties.

4. Customer satisfaction

Perceived bias can significantly impact customer satisfaction. By tackling algorithmic bias, compliance officers can improve customer experiences and foster long-term customer relationships and loyalty.

5. Protecting brand reputation

Instances of unchecked bias can harm a company's reputation and customer trust. Compliance officers can play a crucial role in preventing such instances, thereby safeguarding the company's brand image.

6. Reducing litigation risk

Algorithmic bias can lead to legal implications, such as lawsuits for discriminatory practices. Compliance officers who understand these risks can put measures in place to reduce potential litigation.

7. Enhancing accuracy

Bias can distort the results produced by AI systems. By addressing bias, compliance officers can help improve the accuracy of AI systems, ensuring that decisions are made based on reliable information.

8. Improving decision-making

Compliance officers play a central role in organizational decision-making. Understanding algorithmic bias can inform more effective, risk-aware decisions, especially in the use of AI and machine learning tools.

9. Ensuring ethical standards

Addressing bias is a crucial part of upholding ethical standards in AI and financial operations. By actively addressing algorithmic bias, compliance officers can foster an ethical organizational culture that aligns with the values of fairness, equity, and justice.

10. Promoting long-term sustainability

Unchecked algorithmic bias can jeopardize the long-term acceptance and sustainability of AI in finance. Compliance officers need to be proactive in understanding and addressing bias for the longevity and success of AI in the financial industry.

In essence, understanding and addressing algorithmic bias is not optional for compliance officers; it is a necessity. As they navigate the increasingly digital financial landscape, their grasp of these issues will play a crucial role in shaping an equitable, compliant, and prosperous future for their organizations.

Navigating the complex world of compliance with the right tools

In the face of growing complexity in the regulatory landscape, coupled with the integration of AI and machine learning in financial systems, the tools that compliance officers use become increasingly important. They can shape the efficiency of compliance operations, the fairness of AI systems, and ultimately, the success of the organization in the digital age.

Key features to look for in a compliance platform include fairness, transparency, and adaptability.

Fairness refers to the ability of the platform to promote unbiased outcomes. The platform should provide mechanisms to check for and mitigate algorithmic bias, ensuring that AI systems operate in a fair and equitable manner. This includes mechanisms for testing and validating AI models and for interpreting their decisions.
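As an illustration of one such testing mechanism, the sketch below computes approval rates per group and the ratio of the lowest rate to the highest (often called the disparate impact ratio). The 0.8 threshold is the "four-fifths rule" from US employment-selection guidelines, used here only as an illustrative screening heuristic, not as a financial regulatory standard. The group labels and decision counts are hypothetical.

```python
def selection_rates(decisions):
    """decisions: list of (group, approved) pairs -> approval rate per group."""
    totals, approvals = {}, {}
    for group, approved in decisions:
        totals[group] = totals.get(group, 0) + 1
        approvals[group] = approvals.get(group, 0) + int(approved)
    return {g: approvals[g] / totals[g] for g in totals}

def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest group approval rate."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody is approved, so approval rates do not differ
    return min(rates.values()) / highest

# Hypothetical outcomes: group A approved 60/100, group B approved 40/100.
decisions = [("A", True)] * 60 + [("A", False)] * 40 + [("B", True)] * 40 + [("B", False)] * 60
ratio = disparate_impact_ratio(decisions)
print(round(ratio, 3))  # 0.667
print(ratio < 0.8)      # True: below the illustrative threshold, so flag the model for review
```

A ratio below the threshold does not prove discrimination on its own; it signals that the model's outcomes deserve a closer look.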

Transparency in a platform is crucial for both internal operations and regulatory compliance. The platform should provide clear visibility into the workings of AI systems and the decision-making process. This can involve features that allow for easy tracking and reporting of compliance activities, as well as features that enable the interpretation and explanation of AI decisions.
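For a linear scoring model, one simple way to explain an individual decision is to rank each feature's contribution (weight times value) and report the largest ones as reason codes. The sketch below assumes a hypothetical three-feature risk model with made-up weights and intercept; more complex models generally need dedicated explanation techniques.

```python
# Hypothetical linear risk model; weights and intercept are illustrative only.
WEIGHTS = {"txn_velocity": 0.8, "new_account": 1.5, "cross_border": 0.6}
INTERCEPT = -1.0

def explain(features, top_k=2):
    """Return the risk score and the top_k features that contributed most to it."""
    contributions = {name: WEIGHTS[name] * features.get(name, 0.0) for name in WEIGHTS}
    score = INTERCEPT + sum(contributions.values())
    reasons = sorted(contributions, key=contributions.get, reverse=True)[:top_k]
    return score, reasons

score, reasons = explain({"txn_velocity": 2.0, "new_account": 1.0, "cross_border": 0.0})
print(round(score, 2), reasons)  # 2.1 ['txn_velocity', 'new_account']
```

Reporting the reasons alongside the score gives reviewers and regulators something concrete to check, which is the kind of visibility the paragraph above calls for.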

Adaptability refers to the ability of the platform to keep pace with the rapidly evolving regulatory and technological landscape. It should be able to incorporate regulatory changes quickly and easily. Additionally, it should support the adoption of new AI and machine learning technologies and their responsible use in compliance operations.

A platform equipped with these features can significantly aid compliance officers in understanding and combating algorithmic bias. It can automate routine tasks, freeing up time for compliance officers to focus on more strategic activities. It can provide insights into potential sources of bias and suggest ways to mitigate them. It can also facilitate the demonstration of regulatory compliance and the maintenance of ethical standards.

In essence, the right compliance platform can empower compliance officers to navigate the complex world of compliance with confidence and success. It can provide them with the tools they need to ensure fairness, uphold ethical standards, satisfy customers, and meet regulatory requirements—all while harnessing the power of AI and machine learning. Therefore, choosing the right platform becomes a strategic decision, with long-term implications for the organization's compliance operations and overall success.

Conclusion

In the face of algorithmic bias and complex compliance requirements, the right tool makes all the difference. Flagright offers just that – an AI-powered, centralized AML compliance and fraud prevention platform.

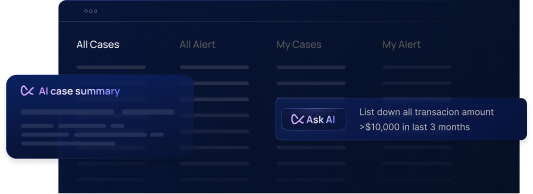

With features like real-time transaction monitoring, customer risk assessment, watchlist screening, and AI forensics, Flagright provides robust compliance solutions that effectively combat algorithmic bias.

Further enhancing its suite of services, Flagright integrates with CRMs like Salesforce, Zendesk, and HubSpot, consolidating customer correspondence and enhancing operational efficiency.

Innovative solutions like the GPT-powered merchant monitoring, AI narrative writer, and suspicious activity report (SAR) generator save compliance officers invaluable time, ensuring efficient operations while maintaining compliance integrity. With Flagright, compliance officers can wrap up integrations within one week, providing them with valuable time to focus on core compliance responsibilities.

Flagright represents the future of fair, effective, and efficient compliance operations. By helping compliance officers understand and mitigate algorithmic bias, it sets a high standard in the digital age of finance.

Begin your journey towards fair, effective, and efficient compliance operations today. Leverage the power of AI and mitigate algorithmic bias with Flagright. Schedule a free demo with us!
