AT A GLANCE

- The problem: AML teams are overwhelmed by alert volume, with false positives often exceeding 90%, leading to backlogs and analyst burnout.

- The root cause: Rules-based detection systems generate alerts faster than investigation capacity can grow.

- The risk: Growing backlogs increase regulatory exposure and the likelihood of missing real suspicious activity.

- The limitation of traditional fixes: Hiring more analysts does not solve the structural mismatch and is not cost-efficient.

- The solution: AI Forensics reduces investigation time by automating data gathering, analysis, and case preparation.

- The impact: Teams can clear backlogs faster, reduce false positives, and focus human effort on high-risk, complex cases.

- The advantage: Stronger AML controls, improved analyst retention, and scalable compliance without linear headcount growth.

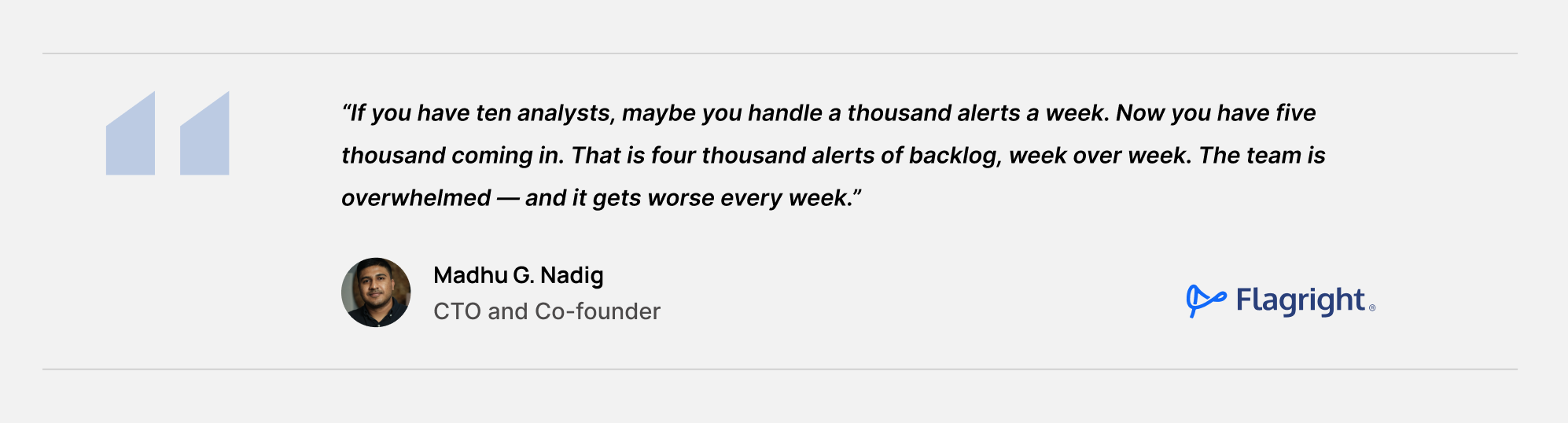

There is a conversation happening in compliance departments across the financial industry, and it tends to happen behind closed doors. The honest version sounds something like this: we are losing. Not to fraudsters (or not only to fraudsters) but to volume. We are generating more alerts than we can possibly work through, our backlogs are growing faster than we can clear them, and our most experienced analysts are burning out on work that should never have reached their desks in the first place.

Alert overload is not a new problem, but it is an accelerating one. And the traditional solution (hire more analysts, build bigger teams) is no longer viable as a primary strategy.

Understanding the Mechanics of Alert Overload

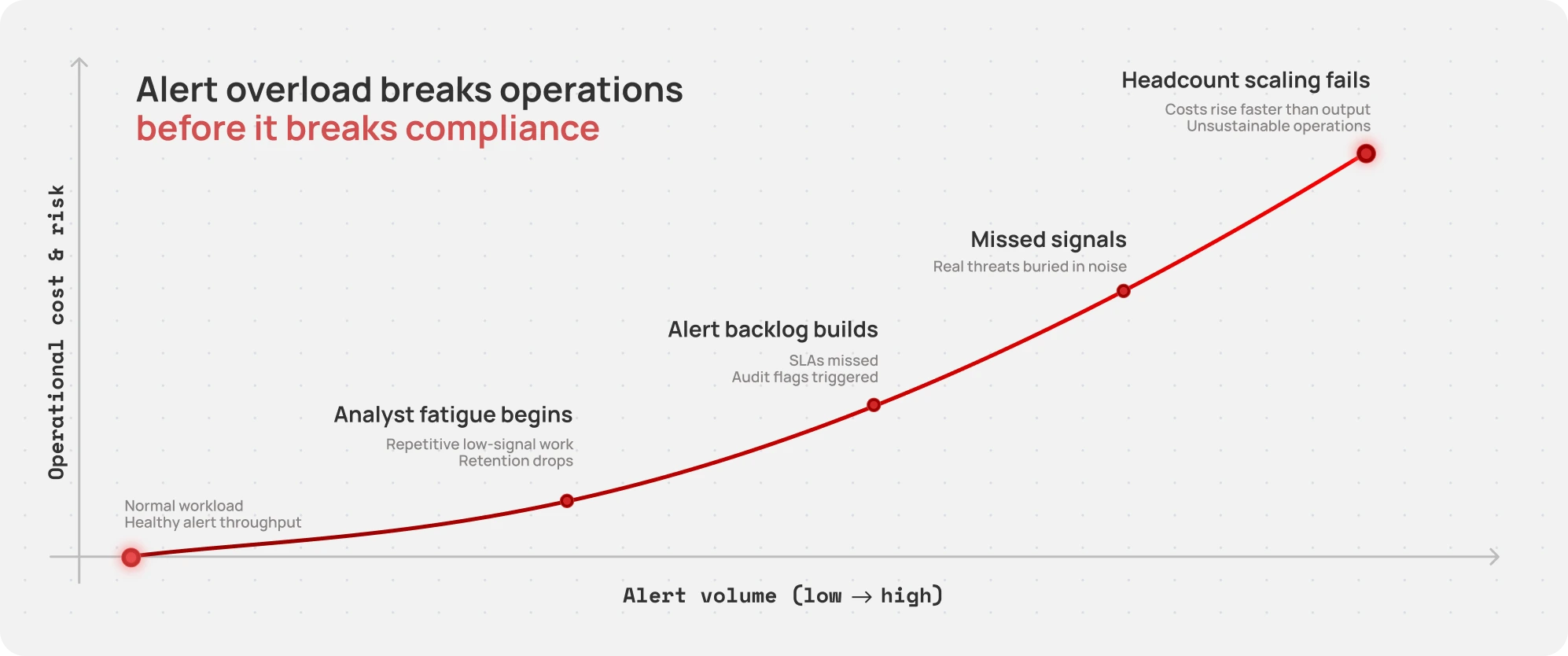

Alert overload happens when the volume of alerts requiring investigation exceeds the capacity of your investigative team to work through them at the required standard. The gap compounds over time: unresolved alerts become backlogs, backlogs create regulatory exposure, and the pressure to clear the backlog often leads to shortcuts that create their own risk.
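The compounding effect described above can be illustrated with a small sketch. It assumes a deliberately simple model in which a fixed number of alerts arrive each day and each analyst clears a fixed number; the function name and all figures are hypothetical and chosen only for illustration.

```python
def project_backlog(daily_alerts, analysts, alerts_per_analyst_per_day,
                    days, starting_backlog=0):
    """Return the end-of-day backlog for each day in the projection.

    Simplifying assumptions: constant arrivals, constant capacity,
    and no alert is ever closed before it arrives.
    """
    capacity = analysts * alerts_per_analyst_per_day
    backlog = starting_backlog
    history = []
    for _ in range(days):
        # Unworked alerts carry over; the backlog cannot go below zero.
        backlog = max(0, backlog + daily_alerts - capacity)
        history.append(backlog)
    return history


# Hypothetical institution: 1,000 alerts a day, 10 analysts, 80 alerts each.
history = project_backlog(daily_alerts=1000, analysts=10,
                          alerts_per_analyst_per_day=80, days=30)
print(history[0], history[-1])
```

Under these assumed numbers the team falls 200 alerts further behind every day, so even a modest daily shortfall becomes a large backlog within a month.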

The root cause is structural. Transaction monitoring and sanctions screening systems generate alerts based on rules and models that are necessarily broad; they are calibrated to catch suspicious activity, which means they will also catch a significant volume of activity that turns out to be benign. Industry estimates consistently put the false positive rate for AML alerts above 90 percent. That means the vast majority of the work your analysts do every day is investigation that concludes with a clean close.

That is not a failure of your team. It is a systemic mismatch between detection scale and investigation capacity. And it is getting worse as transaction volumes increase, real-time payment adoption accelerates, and regulatory requirements become more demanding.

What Alert Overload Actually Costs

Before getting to solutions, it is worth being specific about what alert overload costs, because the business case for addressing it is stronger than most executives realize.

- Talent attrition: Experienced compliance analysts are highly skilled professionals. When they spend the majority of their working hours on low-signal alert clearing, retention suffers. The institutional knowledge that walks out the door when a senior analyst leaves is difficult to quantify and even harder to replace.

- Regulatory exposure: Alert backlogs are a direct regulatory liability. Examiners who find large volumes of unworked or late-worked alerts will view this as a control deficiency, regardless of the reason.

- Missed signals: When teams are overwhelmed by volume, the risk of missing genuinely suspicious activity increases. The analyst who has worked through 80 alerts today is less likely to catch the subtle pattern in alert 81 than the analyst working at a sustainable pace.

- Hiring costs: The unit economics of adding analyst headcount to solve a volume problem are poor. You are hiring expensive, specialized talent to perform work that is, in most cases, procedural data gathering.

How Flagright AI Forensics Addresses Overload at the Root

AI Forensics attacks alert overload in two complementary ways, and compliance leaders can choose how aggressively to deploy each based on their institution's risk appetite and regulatory posture.

Approach One: Multiplying Analyst Capacity

Even before any alerts are automated, AI forensics can dramatically reduce the time it takes a human analyst to work through a case. Today, a typical Level 1 investigation requires the analyst to pull data from multiple systems, cross-reference external sources, apply the institution's SOP, document findings, and reach a disposition. That process takes five to fifteen minutes per alert under normal conditions.

With AI forensics in assisted mode, the agent handles all of the data gathering, cross-referencing, and initial analysis before the alert reaches the analyst's queue. By the time the analyst opens the case, the investigative groundwork is done. The agent has surfaced the relevant evidence, applied the SOP, and generated a recommended disposition with a full reasoning chain attached. The analyst reviews, exercises judgment, and closes, often in under a minute.

If review time falls from five minutes or more per alert to roughly one, a team of ten analysts can handle what previously required a team of fifty. Existing headcount clears the backlog. Skilled professionals spend their time on decisions rather than data collection.
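The capacity arithmetic can be checked with a short sketch. It uses the five-to-fifteen-minute range quoted above and assumes an assisted review time of one minute as a round figure for "under a minute"; the function names are hypothetical.

```python
def capacity_multiplier(minutes_before, minutes_after):
    """How many times more alerts one analyst can review per unit of time."""
    return minutes_before / minutes_after


def equivalent_team_size(analysts, minutes_before, minutes_after):
    """Size of an unassisted team needed to match the assisted team's output."""
    return analysts * capacity_multiplier(minutes_before, minutes_after)


# Lower and upper ends of the five-to-fifteen-minute range,
# against an assumed one-minute assisted review.
low = equivalent_team_size(analysts=10, minutes_before=5, minutes_after=1)
high = equivalent_team_size(analysts=10, minutes_before=15, minutes_after=1)
print(low, high)
```

On these assumptions the ten-to-fifty comparison corresponds to the conservative end of the range. Real gains depend on how much of an analyst's day is spent on alert review at all.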

Approach Two: Autonomous Clearance of Low-Risk Queues

The second lever is deploying agents in full autonomy for defined categories of alerts. This is not the same as removing human oversight; it is repositioning it. Instead of a human reviewing every alert individually, governance happens at the program level: regular sampling of agent decisions, performance monitoring, drift detection, and escalation protocols that surface anything unusual.

A Practical Example: An institution with a backlog of 100,000 low-risk alerts would need a large dedicated team just to clear the backlog, before accounting for ongoing volume. With autonomous AI forensics deployed on that queue, the backlog can be cleared in minutes with every decision documented, reasoned, and auditable.

The key to making full autonomy work in a regulated environment is the governance framework that surrounds it. Agents must operate within a defined scope, aligned with internal risk appetite. Every autonomous action must be logged and explainable. Escalation paths must be clear and fast. Human review of edge cases must be built into the workflow. When these conditions are met, full autonomy is not a compliance risk; it is a compliance control.
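As a rough illustration of those conditions, the sketch below routes each alert by scope, logs every decision, and flags a share of autonomous decisions for human sampling. It is not Flagright's implementation or API; the class names, fields, category labels, and thresholds are all hypothetical.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Alert:
    alert_id: str
    category: str
    risk_score: float  # hypothetical scale: 0.0 (low) to 1.0 (high)


@dataclass
class AutonomyPolicy:
    autonomous_categories: set  # categories agents may handle alone
    max_risk_score: float       # anything above this is escalated
    sample_rate: float          # share of autonomous decisions sent to human QA
    audit_log: list = field(default_factory=list)

    def route(self, alert, rng=random):
        """Decide who handles the alert and record the decision."""
        in_scope = (
            alert.category in self.autonomous_categories
            and alert.risk_score <= self.max_risk_score
        )
        if not in_scope:
            decision = "escalate_to_human"
        elif rng.random() < self.sample_rate:
            decision = "autonomous_with_qa_sample"
        else:
            decision = "autonomous"
        # Every routing decision is logged, whether autonomous or escalated.
        self.audit_log.append({
            "alert_id": alert.alert_id,
            "category": alert.category,
            "risk_score": alert.risk_score,
            "decision": decision,
        })
        return decision


policy = AutonomyPolicy({"low_value_domestic"}, max_risk_score=0.3, sample_rate=0.05)
print(policy.route(Alert("A-1", "sanctions_hit", 0.2)))  # outside the defined scope
```

Keeping scope, threshold, and sampling rate as explicit policy fields means a change in risk appetite becomes a reviewable configuration change rather than a change in agent behavior.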

The Human Dividend

The framing of "AI replacing analysts" misses the more important point. The institutions that deploy AI forensics most effectively are not shrinking their compliance teams. Instead, they are redirecting them.

When the routine, procedural volume is handled by agents, analysts are freed for the work that genuinely requires human judgment: complex multi-jurisdictional cases, novel typologies that fall outside established patterns, SAR drafting that requires narrative judgment, and cross-functional escalations that need experienced eyes. This is the work that develops skills, builds institutional knowledge, and actually requires the training and expertise that experienced analysts bring.

For talent retention, this matters. Compliance professionals who entered the field to investigate financial crime did not sign up to spend their days clearing obvious false positives. Giving them back their professional purpose is not a soft benefit; it is a retention strategy.

What to Look for When Evaluating AI Solutions

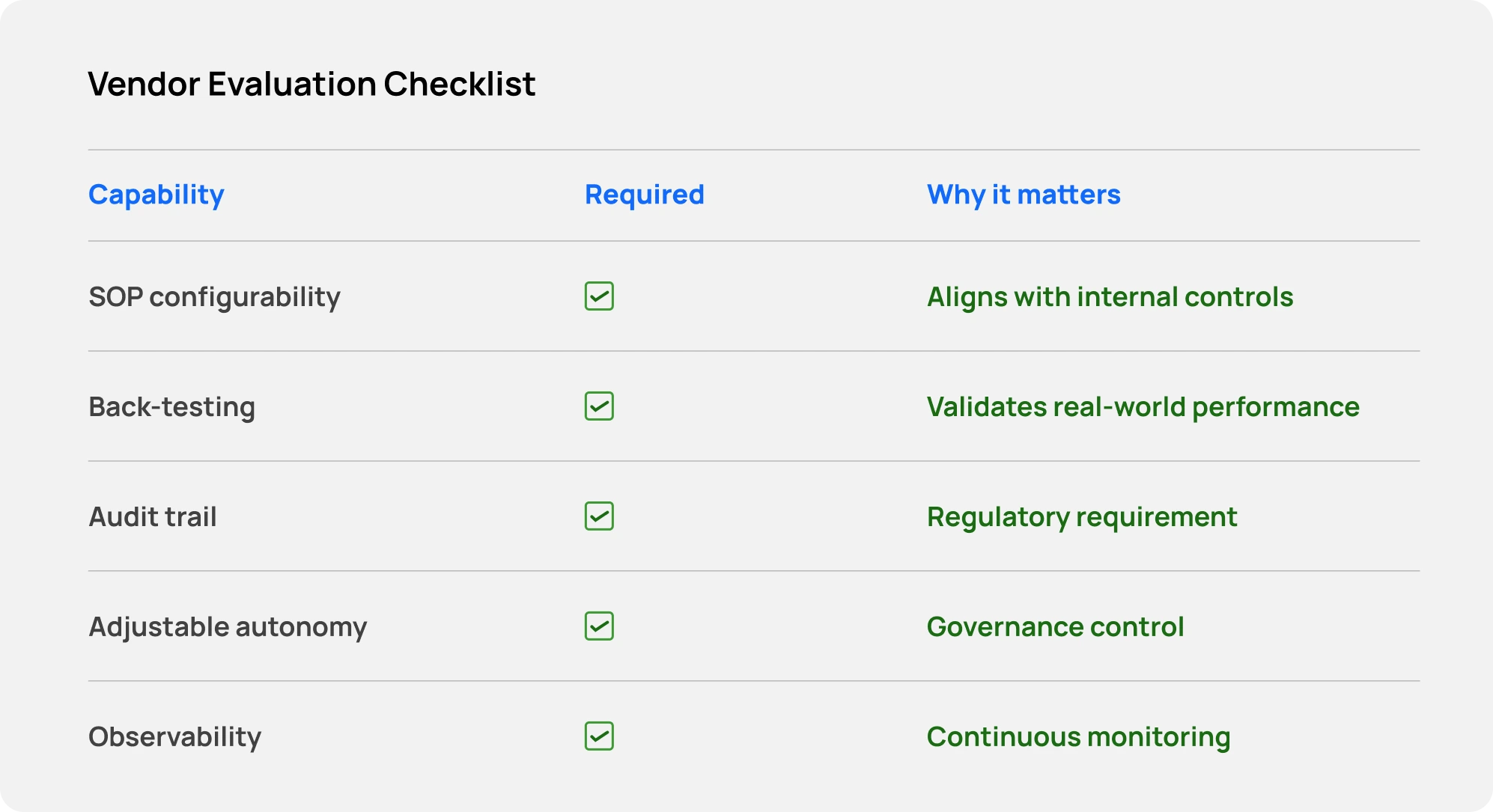

Not all agentic AI platforms are equally suited to compliance environments. For executives evaluating solutions, there are several non-negotiable requirements:

- SOP configurability: The system must execute your institution's procedures, not a generic version of them. Look for platforms where your SOPs drive agent configuration directly.

- Back-testing capability: Before deployment, you should be able to run agents against historical alert data and compare their decisions to actual analyst dispositions. This is the only way to validate performance in your specific environment.

- Full audit trail: Every agent decision must generate a complete, human-readable reasoning chain. Aggregate performance reporting is not a substitute for individual case explainability.

- Adjustable autonomy: The platform should support both assisted and autonomous modes, with governance controls that allow you to define scope, escalation thresholds, and review requirements precisely.

- Integrated observability: You need visibility into both the AI layer and the rules layer, with shared infrastructure so performance can be measured consistently across both.
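The back-testing requirement in the list above can be sketched as a comparison between historical analyst dispositions and agent decisions on the same alerts. This is a minimal illustration, assuming each alert reduces to a "close" or "escalate" label; the function name, labels, and sample data are hypothetical.

```python
from collections import Counter


def backtest(pairs):
    """Summarize agreement between analysts and the agent.

    pairs: iterable of (analyst_disposition, agent_disposition) tuples,
    one per historical alert.
    """
    counts = Counter(pairs)
    total = sum(counts.values())
    agreed = sum(n for (analyst, agent), n in counts.items() if analyst == agent)
    return {
        "total": total,
        "agreement_rate": agreed / total if total else 0.0,
        # The costliest disagreement: the analyst escalated, the agent closed.
        "agent_closed_analyst_escalated": counts[("escalate", "close")],
        # The cheaper disagreement: the agent escalated what the analyst closed.
        "agent_escalated_analyst_closed": counts[("close", "escalate")],
    }


# Made-up historical sample of 100 alerts, for illustration only.
sample = (
    [("close", "close")] * 90
    + [("escalate", "escalate")] * 6
    + [("close", "escalate")] * 3
    + [("escalate", "close")] * 1
)
print(backtest(sample))
```

Reporting the two kinds of disagreement separately matters because an overall agreement rate can look strong while hiding the few cases where the agent would have closed something an analyst escalated.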

The Compounding Advantage

Alert overload is a compounding problem. Every week that backlogs grow, the regulatory and operational pressure compounds. The cost of delay is not static; it increases over time.

The same is true of the solution. Institutions that deploy Flagright’s AI Forensics report up to a 93% decrease in false positives, while building the institutional knowledge, regulatory trust, and operational muscle that will allow them to expand autonomy responsibly over time. Institutions that wait are building bigger backlogs.

For compliance executives, the question is not whether AI forensics will become standard in the industry. It will. The question is whether your institution will be among the early movers who shape how it is deployed, or among those who adopt it under pressure to catch up.
