AT A GLANCE

- AML is not a choice between rules and AI; it is an architecture problem.

- Rules remain essential for deterministic, regulator-mapped detection.

- Rules generate alerts, but cannot perform full investigations.

- AI Forensics works best downstream of rules, accelerating investigation and evidence gathering.

- Explainability, auditability, and traceability are non-negotiable in regulated environments.

- The winning model is hybrid: rules for detection, AI for investigation, humans for decisions.

For the last few years, much of the conversation around anti-money laundering technology has been framed as a choice: legacy rules-based systems on one side, modern AI-powered detection on the other. Legacy is slow and brittle, the argument goes. AI is fast and adaptive. The conclusion feels obvious.

That framing is wrong.

Banks, fintechs, and payment providers do not operate in a vacuum. They operate in a regulated environment where decisions must be explainable, controls must be auditable, and risk frameworks must hold up under independent scrutiny. The question is not whether to replace rules with AI. The question is how to combine deterministic controls, investigator judgment, and AI-driven analysis in a way that is both operationally effective and regulator-ready.

That distinction is not semantic. It shapes every major architecture decision a compliance leader will make in the next three to five years.

The Federal Reserve's SR 11-7 guidance requires robust development, effective validation, and sound governance for models — including "effective challenge" of model outputs. The OCC's Model Risk Management handbook explicitly extends these expectations to AI and machine learning used for fraud prevention and BSA/AML monitoring, noting that risk management should be commensurate with the risk of the function the AI supports.

That is why the future of AML is not rules or AI. It is rules and AI.

The Case for Rules: Don't Discard What Works

Rules remain foundational to AML compliance, not because the industry has failed to modernize, but because some obligations are inherently deterministic, and deterministic obligations are exactly what rules are designed to handle.

Take currency reporting. FinCEN requires financial institutions to report cash transactions above $10,000, including multiple same-day transactions that aggregate above that threshold. FinCEN also makes clear that structuring, breaking transactions into smaller amounts specifically to evade that requirement, is unlawful. These are not ambiguous requirements. They are explicit, well-defined, and directly codifiable as control logic.

For requirements like these, rules are not a legacy workaround. This is exactly where rule-based systems are strongest. A rule can be explicit: flag activity over a threshold, detect repeated behavior below a threshold, or surface patterns tied to known typologies. That kind of logic is fast, stable, transparent, and directly traceable to a regulatory mandate. It does exactly what it is told to do, and in a regulated environment that predictability is a feature, not a limitation.
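To make "directly codifiable" concrete, here is a minimal sketch of two such rules in Python. It assumes a simplified transaction record and is not any vendor's rule engine. The `CashTxn` type, the function names, and the near-threshold tuning values (`NEAR_THRESHOLD_FLOOR`, `MIN_NEAR_THRESHOLD_COUNT`) are hypothetical. Only the $10,000 threshold and same-day aggregation come from the requirement described above.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from decimal import Decimal

CTR_THRESHOLD = Decimal("10000")        # reporting threshold cited above
NEAR_THRESHOLD_FLOOR = Decimal("9000")  # hypothetical tuning value
MIN_NEAR_THRESHOLD_COUNT = 3            # hypothetical tuning value


@dataclass(frozen=True)
class CashTxn:
    customer_id: str
    day: date
    amount: Decimal


def ctr_alerts(txns):
    """Return (customer, day) pairs whose same-day cash total exceeds the threshold."""
    totals = defaultdict(Decimal)  # Decimal() starts each total at 0
    for t in txns:
        totals[(t.customer_id, t.day)] += t.amount
    return sorted(key for key, total in totals.items() if total > CTR_THRESHOLD)


def near_threshold_alerts(txns):
    """Return customers with repeated deposits at or just below the threshold."""
    counts = defaultdict(int)
    for t in txns:
        if NEAR_THRESHOLD_FLOOR <= t.amount <= CTR_THRESHOLD:
            counts[t.customer_id] += 1
    return sorted(c for c, n in counts.items() if n >= MIN_NEAR_THRESHOLD_COUNT)


sample = [
    CashTxn("c1", date(2024, 3, 1), Decimal("6000")),
    CashTxn("c1", date(2024, 3, 1), Decimal("4500")),  # same day: 10,500 in total
    CashTxn("c2", date(2024, 3, 1), Decimal("9500")),
    CashTxn("c2", date(2024, 3, 4), Decimal("9800")),
    CashTxn("c2", date(2024, 3, 7), Decimal("9900")),
]
print(ctr_alerts(sample))
print(near_threshold_alerts(sample))
```

Both functions are pure and threshold-driven, so an examiner can read the condition, replay it against historical data, and get the same alerts every time. The second rule only surfaces a pattern. Whether that pattern is actually structuring is an investigative question, not something the rule can answer.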

This is also consistent with the broader regulatory posture. FATF's risk-based approach guidance does not prescribe a single compliance model for every institution. It emphasizes an effective, tailored approach aligned to an institution's specific risk profile and operating context. A well-governed rules layer is often the clearest operational expression of that baseline discipline: something auditors and examiners can trace, test, and verify directly.

Where Rules Begin to Break Down

The problem with rules is not that they are obsolete. It is that they are limited by design, and the patterns that define the most serious financial crime today consistently exceed those limits.

Rules perform well when behavior is legible and logic can be expressed cleanly as a condition. They fail when investigators need to reason across multiple entities, accounts, counterparties, and time periods; when the signal is not a threshold breach but a pattern, a behavioral shift, or a subtle combination of factors that individually look benign.

Consider a structuring investigation. A rule can flag deposits just below a reporting threshold. But a credible investigation requires much more: customer transaction history, connected accounts, counterparty behavior, prior cases, adverse media, watchlist context, and any number of additional signals before an analyst can determine whether this alert is noise or something that warrants escalation. Rules can generate alerts, but they cannot perform an end-to-end, judgment-rich investigation on their own.

As Madhu puts it directly in the interview: rules can generate alerts for subtle or layered activity, but they cannot investigate them.

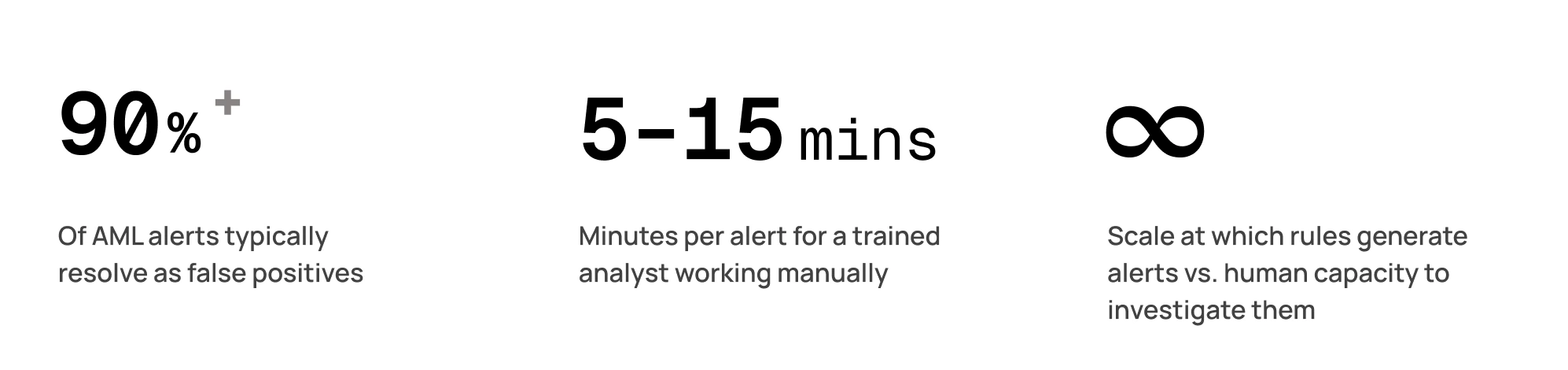

That investigative gap is where most AML programs lose ground. Alert volumes grow. Investigation queues lengthen. Analysts spend the majority of their time on cases that resolve to nothing, and the cases that actually matter get less attention than they deserve.

Why AI Belongs Downstream of Rules, Not Instead of Them

The strongest use case for AI in AML is not replacing deterministic detection. It is accelerating investigation after detection has already occurred.

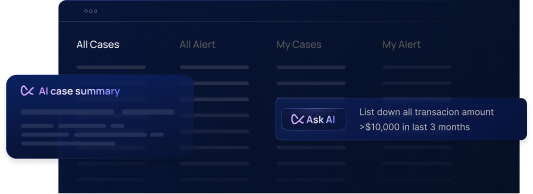

That is the operating model for Flagright. Rules continue doing what they do best: generating alerts based on transparent control logic tied directly to regulatory requirements. AI then takes on the work that currently consumes the most analyst time: gathering evidence across multiple systems, summarizing context, applying investigation procedures, cross-referencing signals, and helping teams reach defensible dispositions faster and more consistently.

This matters because the operational bottleneck in many compliance teams is not “can we generate more alerts?” It is “can we investigate what we already have, at the required speed and standard, without burning out the team that knows how to do it?”

This architecture also aligns more cleanly with supervisory expectations. SR 11-7 notes that model risk grows with complexity, assumptions, extent of use, and the consequences of model failure. Deploying AI as a black-box replacement for core control logic creates significant governance exposure. But if AI is used in a bounded, observable, reviewable investigative role downstream of explainable detection triggers, institutions can keep the control architecture intact and make the AI component much easier to validate, monitor, and defend.

That is a much more practical architecture for real compliance teams.

Explainability Is Non-Negotiable

One of the most common mistakes in compliance AI discussions is treating accuracy as the primary standard. It is not, or at least it is not sufficient on its own.

In financial crime compliance, outputs must be defensible. Examiners do not evaluate whether an AI recommendation was statistically accurate in aggregate. They evaluate whether a specific decision can be explained, traced, and justified against the institution's own procedures and the applicable regulatory framework.

Madhu expresses this directly in the interview: if an institution cannot trust an AI's output, cannot explain how it reached a conclusion, and cannot demonstrate that to an examiner, the AI has no place in compliance. That is not a conservative position; it is the operationally correct one.

For AI to function as a genuine compliance control, institutions need traceability at every step:

- What triggered the case — the specific rule, threshold, or signal that generated the alert

- What evidence was gathered — the data sources consulted and findings surfaced during investigation

- What procedure was applied — the SOP steps followed, in sequence, by the AI agent

- Why the recommendation was made — the reasoning chain connecting evidence to disposition

- Where human judgment entered (if applicable) — the review, override, or escalation decision made by the analyst

Without this chain, AI becomes a governance liability rather than an operational advantage.
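The chain above can be sketched as a single audit record per case. This is an illustration only, assuming a simple in-memory structure. The `CaseTrace` and `Evidence` names and their fields are hypothetical and do not describe Flagright's data model.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class Evidence:
    source: str   # data source consulted, e.g. "transaction history"
    finding: str  # what that source showed


@dataclass
class CaseTrace:
    trigger: str  # rule, threshold, or signal behind the alert
    evidence: list[Evidence] = field(default_factory=list)
    procedure_steps: list[str] = field(default_factory=list)  # SOP steps, in order
    reasoning: str = ""       # link from evidence to disposition
    recommendation: str = ""  # e.g. "close" or "escalate"
    human_decision: Optional[str] = None  # review, override, or escalation, if any

    def missing_links(self) -> list[str]:
        """Return the names of required links that are still empty."""
        required = {
            "trigger": self.trigger,
            "evidence": self.evidence,
            "procedure_steps": self.procedure_steps,
            "reasoning": self.reasoning,
            "recommendation": self.recommendation,
        }
        return [name for name, value in required.items() if not value]

    def to_json(self) -> str:
        """Serialize the full chain for an audit log."""
        return json.dumps(asdict(self), indent=2)
```

A record like this makes gaps visible before an examiner finds them: a case whose `missing_links()` list is not empty has a recommendation that cannot yet be fully explained. The human decision is optional in this sketch because, as noted in the list, it applies only where an analyst reviewed the case.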

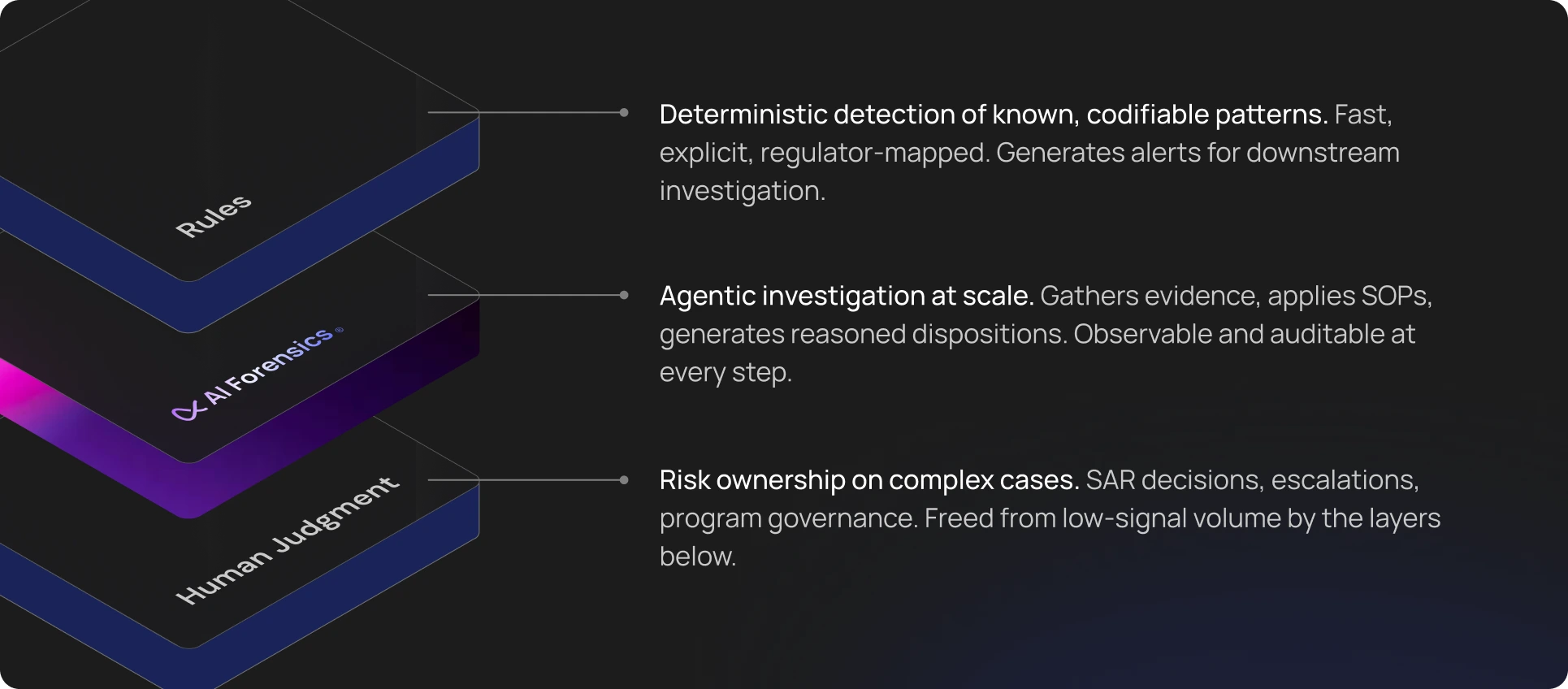

The Architecture That Holds Up

The future of AML compliance is hybrid by design, not as a compromise, but as the architecture most likely to survive both operational scale and regulatory scrutiny.

Rules remain essential for clear, auditable, regulator-linked detection. Human investigators remain essential for high-stakes judgment, escalation decisions, and risk ownership. AI becomes the force multiplier in the middle: the layer that helps institutions investigate faster, clear low-signal work more efficiently, and apply procedures more consistently at scale.

This is also the most realistic way to think about autonomy. Human-in-the-loop oversight is the default: AI recommends, humans decide. As agents demonstrate consistent, well-reasoned performance on back-tested data and live cases, some low-risk, high-volume queues may eventually be suitable for more automation, while higher-risk investigations should remain human-led. This is how customers increasingly think about deployment: autonomy is introduced selectively, as trust is established.
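As a rough sketch of that default, the routing logic can be written so that human review is the fallback and automation is an explicit, narrow exception. The queue name, risk tiers, and allow-list below are hypothetical. In practice an institution would populate the allow-list only after back-testing and governance sign-off.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    HUMAN_REVIEW = "human_review"
    AUTO_CLOSE = "auto_close"


@dataclass(frozen=True)
class Recommendation:
    queue: str        # alert queue the case came from
    risk_tier: str    # "low", "medium", or "high"
    disposition: str  # AI recommendation: "close" or "escalate"


# Hypothetical allow-list of queues approved for automation.
AUTOMATION_APPROVED_QUEUES = frozenset({"low_value_duplicate_alerts"})


def route(rec: Recommendation, approved=AUTOMATION_APPROVED_QUEUES) -> Route:
    """Send a case to auto-close only if every automation condition holds."""
    if (
        rec.risk_tier == "low"
        and rec.disposition == "close"
        and rec.queue in approved
    ):
        return Route.AUTO_CLOSE
    # Everything else, including any escalation, goes to an analyst.
    return Route.HUMAN_REVIEW
```

The design choice is that automation has to be earned queue by queue: an unknown queue, a higher risk tier, or an escalation recommendation all fall through to human review without any extra configuration.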

This is also a more credible path with regulators than the usual “AI will replace analysts” narrative. Institutions that approach AI adoption gradually, with documentation, back-testing, and clear governance at each stage, build programs that examiners can follow and trust. Institutions that treat AI as a wholesale replacement for existing controls tend to create examination problems they did not anticipate.

The Strategic Takeaway

AML leaders do not face a binary choice between legacy rules and modern AI. They face a design challenge: building a program where every layer handles the work it is actually best suited for, and where the whole is more defensible than the sum of its parts.

Rules handle what is clearly codifiable. AI investigates what is too manual to scale. Humans decide what carries material risk.

That is not a conservative compromise. It is the architecture that performs under operational pressure, holds up under regulatory examination, and gives compliance teams the capacity to focus their expertise where it actually matters.

At Flagright, this is the thesis behind combining AI Forensics with traditional controls as an integrated program, not as competing models. The goal is not to replace explainable systems with opaque ones. It is to preserve what works, solve the investigation bottleneck that has been slowing teams down for years, and build compliance programs that scale without sacrificing the governance standards that protect the institution.

That is the conversation the industry should be having now.

Book a demo to see how Flagright combines AI Forensics with a risk-based rules engine to achieve a 93% decrease in false positives.
